Give the AI to Scientists...Just Scientists

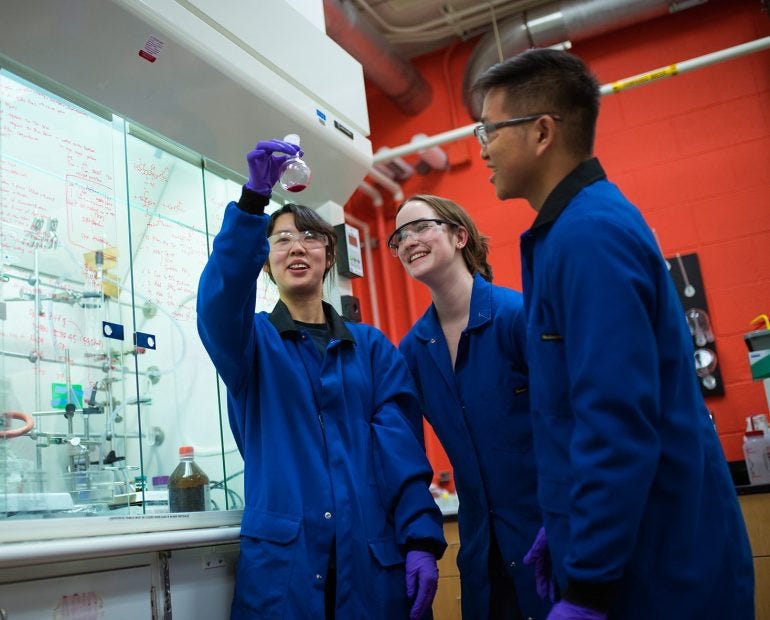

Stop it with the sexy chatbots and making everyone do ten times the work. Let's put AI toward scientific discovery. But can we keep scientists engaged (and employed) long enough to make it possible?

AI may soon unleash an enormous wave of discovery in labs and academic departments around the world. It’s the one place I feel somewhat good about its use (although my good feelings run aground pretty quickly, as I’ll explain later).

Early attempts at, for instance, mining existing scientific literature for new ideas are already underway. Researchers have built systems like SciMON (Scientific Inspiration Machines Optimized for Novelty), which can process enormous numbers of published papers and look for “inspiration” in them. But these systems aren’t quite there yet. SciMON’s creators write that although “our methods substantially improve the ability of LLMs in our task,” the ideas SciMON generates “fall far behind scientific papers in terms of novelty, depth and utility,” raising “fundamental challenges toward building models that generate scientific ideas.”

More focused use of LLMs and other AI tools could soon break through in specific areas, however. Certain pattern-recognition tasks, like predicting breast cancer in mammograms, are already done better by AI than by humans. Researchers have used LLMs to automate the design and execution of experiments in physics. Medical schools are finding that as a “thought partner,” LLMs can supercharge a physician’s reasoning.

And AI is, of course, making it vastly easier for humans to engage with the latest research on a topic. Stanford’s STORM system, which writes a custom research paper on the topic of your choosing, complete with detailed citations, is what I, as a science and technology journalist, have wanted my whole career. AI could even catch mistakes in research. After it was discovered that the paper that generated mass panic about the dangers of black spatulas contained a crucial arithmetic error, researchers launched a collective project to use OpenAI’s o1 reasoning model to spot errors in other papers.

But productivity is what the researchers I speak with are hoping for. I was once reporting a story on Big Tech’s absorption of whole academic departments, a process that both co-opts their work and takes it out of public view, journalists’ view included. I met with a young computer scientist from one of the big tech firms and asked him whether he’d ever want to go back to academia. “Why would I?” he answered. “In a university setting I have to go through a million hoops to study the behavior of maybe 500 people. Here I can look at millions of people’s data in a day.” Putting aside whether Big Tech should have to obey the same rules around protecting human subjects that universities do (and holy geez do I think they should), what he clearly wanted was data and speed. AI could make that possible inside public institutions.

A paper out of MIT suggests that AI can make scientists vastly more productive. In a study of more than a thousand researchers in the R&D lab of a large, unnamed US firm, the economist Aidan Toner-Rodgers looked at what the use of AI did to their work. The researchers in question were materials scientists, employed to chase new chemical compounds that might yield useful, patentable, profitable products. Since 2022 the lab has been using its own generative AI model, one that can reverse-engineer possible materials from a set of prompts. The firm introduced the tool to its researchers in a randomized way, which let researchers like Toner-Rodgers compare the scientists who did and didn’t use it. The impact for those who did use it was staggering:

AI-assisted scientists discover 44% more materials. These compounds possess superior properties, revealing that the model also improves quality. This influx of materials leads to a 39% increase in patent filings and, several months later, a 17% rise in product prototypes incorporating the new compounds.

But market effects come for us all, and they’re already affecting the thousand-some privately employed scientists studied in the MIT paper. The productivity made possible by their materials-finding AI tool is, of course, a fantastic outcome. But a 44% increase in productivity puts more pressure on everyone’s time and performance, not less. Toner-Rodgers found that the productivity gains were most pronounced among the top-performing scientists. Those at the bottom tended to waste time on poor search queries and dead-end uses of the AI, while the top performers burned through their objectives in record time. A bifurcation is clearly coming, and it’s easy to see how it could eventually lead to a culling of those who don’t work well with the technology. And as I wrote last week, the people making these systems are in fact excited about that outcome.

Of course, science as a whole is under attack in this country. The NIH, which Elon Musk’s DOGE has ordered to cut 3,400 positions according to a report last week, is still withholding federal research grants in defiance of a court order. Under the new Health and Human Services secretary, vaccine denier and conspiracy theorist Robert F. Kennedy, Jr., the meetings that guide development of next year’s flu vaccine have been cancelled without explanation, after the worst flu season in 15 years.

And even if all of that weren’t happening, the unhappiness of working with AI may drive these researchers from the job before we ever get to that point. As Toner-Rodgers writes, “82% of researchers see an overall decline in satisfaction” once they begin using the AI tool, and that includes those who use it well and get enormous productivity out of it. As one researcher put it in a survey response, “While I was impressed by the performance of the [AI tool]...I couldn’t help feeling that much of my education is now worthless. This is not what I was trained to do.” This leads Toner-Rodgers to conclude:

These results challenge the view that AI will primarily automate tedious tasks, allowing humans to focus on more rewarding activities (Mollick, 2023; McKendrick, 2024). Instead, the tool automates precisely the tasks that scientists find most interesting—creating ideas for new materials. This reflects a fundamental difference between AI and previous technologies. While earlier innovations excelled in routine, programmable tasks (Autor, 2014), deep learning models generate novel outputs by identifying patterns in their training data.

When we give the jobs we like best to AI, what’s left over is the stuff no one entered their field to do.

I once spent several days interviewing an expert in a very dark area of history: the degree to which societies punish certain crimes, and certain offenders, very differently. Donald Black of the University of Virginia has spent his career documenting what he calls the “gravity of law,” the rules by which legal punishment “falls” more heavily on people lower on the social hierarchy when they’ve committed a crime against someone who sits in a higher position. It’s a fascinating theory, one that Black has tried for decades to substantiate through documents ranging from Hammurabi’s eye-for-an-eye legal code to modern laws that downgrade murder to manslaughter when the killing is a “crime of passion.” But when pressed, Black, like so many social scientists, archivists, and art historians before him, admits that he doesn’t have the army of researchers he’d need to truly comb the world’s libraries to quantitatively prove his theories. (I tell his story more fully in my book.)

Here’s my feeling: enough with the easy money. Enough with the easy layoffs. Let’s lend Black, and other tenured researchers like him, all the LLMs and processing power they want. Let them use AI to decode and trace the origins of ancient texts, to translate animal language, to warn renters that their new apartment might be tainted with lead. We can establish a redeeming use of AI, a category that gives scientists the horsepower they need to improve life for everyone. And we have to let them work in safety behind tenure. Protect them against the market forces that have VCs boasting that they can replace whole industries with AI and that have CEOs announcing they’re done with entire categories of hiring. (Also, let’s not let well-intentioned but dangerous research jump to the market, as I wrote about for The New York Times Magazine.) We want scientists and researchers to use AI for what its boosters say it’s for, the kind of world-improving uses that we so easily conflate with writing our wedding vows for us.

Give AI to scientists, and let them run with it.

Other Things I’m Looking At

Tech People Want to Run Your City

Want to live in a tech-bro city full of experimental nuclear reactors, nanobots, and flying vehicles, without any regulatory oversight whatsoever? No? Well, too bad. “Several groups representing ‘startup nations’ (tech hubs exempt from the taxes and regulations that apply to the countries where they are located) are drafting Congressional legislation to create ‘freedom cities’ in the US that would be similarly free from certain federal laws,” according to Will Knight at Wired. The reporters over there are doing a tremendous job under Katie Drummond and are perfectly placed to tackle the collision of Silicon Valley authoritarianism with Trump’s brand of it.

A Podcast that Won’t Stress You Out

I’ve been struggling to find media I can lose myself in that doesn’t make me anxious about the state of the world or fill me with self-loathing that I didn’t write/say/film it first. (My fatal flaw.) You Must Remember This is Karina Longworth’s passion-project-turned-chart-topper film podcast, a vast archive of stories about the tragedies and triumphs of Hollywood from its golden age to the 1990s. You think your job is stressful? You think no one appreciates the fullness of who you are? You think you have a drinking problem? These stories will help you gain perspective.

What Do We Think of Newsom’s Far-Right Interview Podcast?

On the one hand, I think Newsom is a tremendous debater who has walked unscathed out of media spaces no one else from his party can handle. On the other hand, the first interview on his new show, with far-right podcaster Charlie Kirk, has generated intense, thoughtful criticism: Newsom conceded some ground, but Kirk did not. Does he deserve it?

Tariffs Aren’t Going to Create an American Manufacturing Industry

At least not according to this American company owner, who tried to get his steel point-of-sale stations manufactured here. He says he’d rather just pay the tariffs, considering the terrible results he got from approaching U.S. companies. This is going to take a lot longer than a single administration.

The Most Concise Definition of Fascism I’ve Heard

As I posted on TikTok last week (and got a lot of fascinating feedback), it’s been hard to find a one-sentence definition of fascism. But a history teacher posting there described it fundamentally as a worldview, not a political ideology. He calls it “an allergy to abstract thought,” in which one’s concrete certainty about reality must be protected against any challenge, no matter how gentle or thoughtful, and regardless of whether that challenge leads to better outcomes. It blew my mind, and it resonated with a lot of people. Here’s how one commenter on Substack put it with regard to his own family: